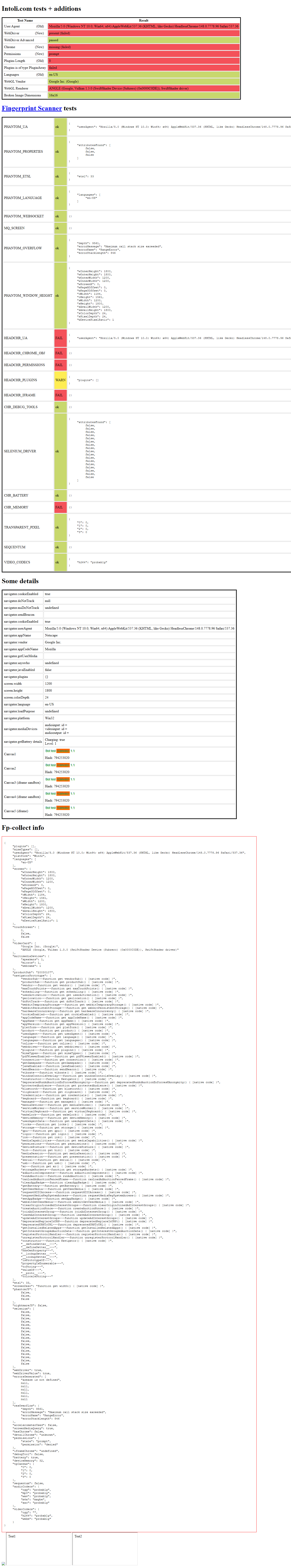

Canvas charts (Chart.js, ECharts): bar chart value reading

What the test does

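```typescript
import { test, expect } from '@playwright/test';

// The page under test renders a Chart.js bar chart with values [40, 45, 60, 50, 35]
// for Mon..Fri. The user expects to see "Wednesday" and "60".
test('bar chart shows the Wednesday value', async ({ page }) => {
  // (page setup and navigation to the chart are omitted here)
  await expect(page.getByText('Wednesday', { exact: true })).toBeVisible();
  await expect(page.getByText('60', { exact: true })).toBeVisible();
});
```

What Playwright sees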

```
Error: expect(locator).toBeVisible() failed

Locator: getByText('Wednesday', { exact: true })
Expected: visible
Error: element(s) not found

Page snapshot:

- heading "Weekly hours" [level=1]
```

Plain-English explanation

Chart.js always draws into an HTML `<canvas>` element, and Apache ECharts does so by default. The axis labels ("Monday", "Wednesday") and data labels ("60") are part of that picture: they are pixels, not text nodes anywhere in the DOM. A human reading the chart sees them; Playwright querying the DOM does not. The page snapshot Playwright reports contains only the `<h1>`, because that is everything the DOM actually has.

A visual agent looks at the rendered screenshot the same way a human does. Optical character recognition (or a multimodal model) extracts "Wednesday" and "60" from pixels. The assertion can be expressed in user terms — "the Wednesday bar shows 60 hours" — and verified against what is on screen.
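The OCR route can be sketched in a few lines. This is a minimal sketch, not how any particular visual agent works. It assumes the OCR step (engine unspecified) returns each word with the centre of its bounding box, and that value labels are drawn above their bars, as in the chart under test. It also uses a hypothetical `OcrWord` shape and made-up sample coordinates. The code pairs the "Wednesday" axis label with the nearest number above it, which turns the pixels back into the user-level claim "the Wednesday bar shows 60".

```typescript
// One recognized word and the centre of its bounding box, in screenshot pixels
// (y grows downward). Hypothetical shape: real OCR engines return their own formats.
interface OcrWord {
  text: string;
  x: number;
  y: number;
}

// Reads "the <label> bar shows <value>" from OCR output: find the x-axis label,
// then take the numeric word above it whose horizontal centre is closest.
function readBarValue(words: OcrWord[], label: string): number | undefined {
  const axisLabel = words.find((w) => w.text === label);
  if (axisLabel === undefined) return undefined;

  let best: OcrWord | undefined;
  for (const w of words) {
    if (!/^\d+(\.\d+)?$/.test(w.text)) continue; // numeric tokens only
    if (w.y >= axisLabel.y) continue; // value labels sit above the axis labels
    const dist = Math.abs(w.x - axisLabel.x);
    if (best === undefined || dist < Math.abs(best.x - axisLabel.x)) best = w;
  }
  return best === undefined ? undefined : Number(best.text);
}

// Hypothetical OCR output for the Mon..Fri chart: y-axis ticks on the left,
// weekday labels along the bottom, value labels above each bar.
const sampleWords: OcrWord[] = [
  { text: "Weekly", x: 200, y: 20 },
  { text: "hours", x: 260, y: 20 },
  { text: "0", x: 30, y: 400 },
  { text: "20", x: 30, y: 300 },
  { text: "40", x: 30, y: 200 },
  { text: "60", x: 30, y: 100 },
  { text: "Monday", x: 100, y: 430 },
  { text: "Tuesday", x: 180, y: 430 },
  { text: "Wednesday", x: 260, y: 430 },
  { text: "Thursday", x: 340, y: 430 },
  { text: "Friday", x: 420, y: 430 },
  { text: "40", x: 100, y: 190 },
  { text: "45", x: 180, y: 170 },
  { text: "60", x: 260, y: 90 },
  { text: "50", x: 340, y: 140 },
  { text: "35", x: 420, y: 215 },
];

console.log(readBarValue(sampleWords, "Wednesday")); // 60
```

Matching on horizontal distance makes the y-axis tick "60" on the far left lose to the value label directly above "Wednesday". A chart with horizontal bars would need the roles of x and y swapped.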

Rendering the same chart with Highcharts, which draws SVG, lets Playwright's text locators work normally, because SVG `<text>` elements are real DOM nodes. The split is canvas vs. SVG, not "charts in general".
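A simplified model shows why the split falls on the renderer. The sketch below uses a hypothetical `DomNode` type instead of a real DOM, and `hasExactText` only approximates how Playwright's text locators match. A canvas chart contributes an element with no text children. An SVG chart contributes `<text>` elements whose text nodes a text search can find.

```typescript
// Minimal stand-in for a DOM tree; hypothetical, for illustration only.
interface DomNode {
  tag: string; // element name, or "#text" for a text node
  text?: string; // only set on "#text" nodes
  children?: DomNode[];
}

// Collects the content of every text node, roughly the material a text locator
// such as getByText() searches. Pixels drawn on a canvas never appear here.
function textNodes(node: DomNode): string[] {
  if (node.tag === "#text") return node.text !== undefined ? [node.text] : [];
  return (node.children ?? []).flatMap(textNodes);
}

function hasExactText(root: DomNode, needle: string): boolean {
  return textNodes(root).some((t) => t.trim() === needle);
}

const heading: DomNode = { tag: "h1", children: [{ tag: "#text", text: "Weekly hours" }] };

// Canvas renderer: labels are pixels, so the canvas element has no children.
const canvasPage: DomNode = { tag: "body", children: [heading, { tag: "canvas" }] };

// SVG renderer: each label is a <text> element holding a real text node.
const svgPage: DomNode = {
  tag: "body",
  children: [
    heading,
    {
      tag: "svg",
      children: [
        { tag: "text", children: [{ tag: "#text", text: "Wednesday" }] },
        { tag: "text", children: [{ tag: "#text", text: "60" }] },
      ],
    },
  ],
};

console.log(hasExactText(canvasPage, "Wednesday")); // false
console.log(hasExactText(svgPage, "Wednesday")); // true
```

The heading is found on both pages, matching the snapshot above. Only the SVG page exposes the chart labels.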

Reproduce with another automation tool

Target: https://echarts.apache.org/examples/en/editor.html?c=bar-simple

Goal: Read the value of one bar (e.g., "Wed") from the rendered chart using selectors only: no screenshot, no OCR.

- Open the URL. The right pane shows a live ECharts bar chart with weekday labels and seven bars.

- Wait until the chart finishes rendering.

- Without taking a screenshot, locate the DOM node that contains the visible text "Wed" or any of the bar values (120, 200, 150, 80, 70, 110, 130).

- Read the value associated with the "Wed" bar from the DOM.

Expected: No DOM node in the chart pane contains the label "Wed" or the numeric values, because they are all painted into a `<canvas>`. A scripted tool fails the lookup; a visual tool reads them straight from the rendered image.
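To grade what a visual tool reports, the reproduction needs the true value of the "Wed" bar. On a category axis, ECharts pairs the i-th `xAxis.data` label with the i-th value of each bar series, so the answer can be read from the option object in the editor's code pane. This sketch assumes that option uses the labels and values listed in the steps (Mon..Sun with 120, 200, 150, 80, 70, 110, 130). `BarOption` and `expectedBarValue` are hypothetical names, and the type covers only the fields used here.

```typescript
// Subset of an ECharts option needed for this check (hypothetical, simplified type).
interface BarOption {
  xAxis: { type: "category"; data: string[] };
  series: { type: "bar"; data: number[] }[];
}

// Assumed to match the bar-simple example source; verify against the editor's code pane.
const barSimple: BarOption = {
  xAxis: { type: "category", data: ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"] },
  series: [{ type: "bar", data: [120, 200, 150, 80, 70, 110, 130] }],
};

// Category bars are index-aligned: the i-th label owns the i-th value of the series.
function expectedBarValue(option: BarOption, label: string, seriesIndex = 0): number | undefined {
  const i = option.xAxis.data.indexOf(label);
  if (i < 0) return undefined;
  return option.series[seriesIndex]?.data[i];
}

console.log(expectedBarValue(barSimple, "Wed")); // 150
```

A visual tool that passes the reproduction should report 150 for "Wed". A selector-only tool has no DOM node in the chart pane from which to read that value.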